Current artificial intelligence systems operate on a rigid turn-based protocol that mirrors text messaging more than natural conversation.

Breaking the Turn-Taking Barrier

Thinking Machines has identified a fundamental limitation in how AI models currently process human interaction. Nearly every commercial AI system today follows the same basic pattern: the user speaks or types an input, the model processes it in full, and the model then generates a complete response before the cycle repeats. This creates an artificial pause between exchanges that doesn’t exist in human dialogue.

The company’s approach attempts to replicate the simultaneous processing that occurs during phone conversations. When humans talk, they continuously adjust their speech based on vocal cues, interruptions, and real-time feedback from their conversation partner. Current AI models cannot perform this type of concurrent processing.

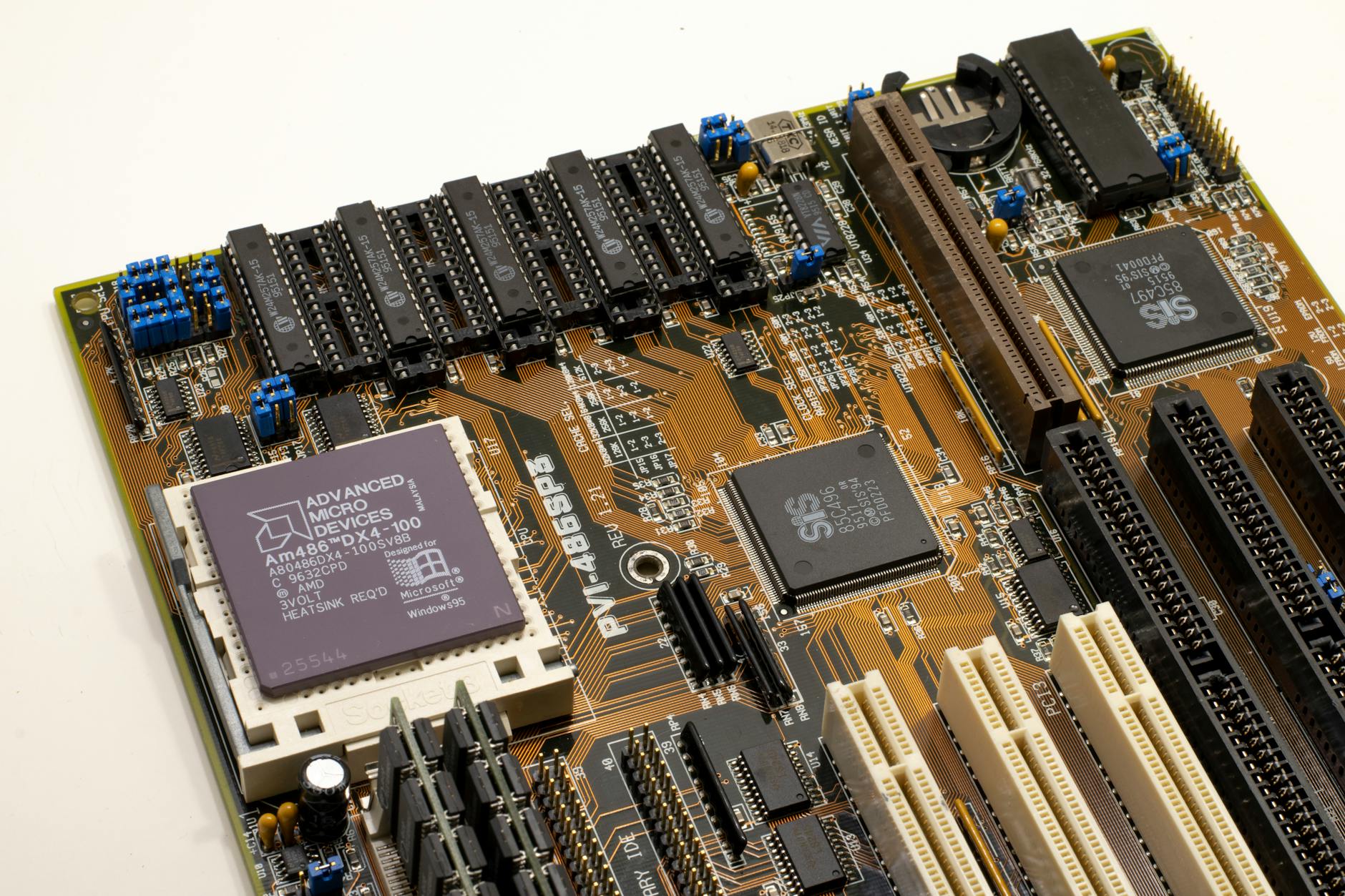

Traditional AI architecture requires complete input before any response generation begins. The model must finish “listening” before it can start “thinking” about its response. This sequential processing creates the characteristic delay users experience with chatbots and voice assistants.

Thinking Machines’ proposed system would fundamentally alter this architecture. Their model would begin processing and responding to input as it receives information, rather than waiting for complete sentences or thoughts. This represents a significant departure from established AI communication patterns.
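The contrast between the two patterns can be illustrated with a toy sketch. It is not Thinking Machines’ design, which is not described in detail here; `turn_based`, `streaming`, and the placeholder `respond` function are hypothetical names, and the "model" simply reports what it has heard so far.

```python
from typing import Iterable, Iterator, List


def respond(heard: List[str]) -> str:
    """Hypothetical stand-in for a model: reports what it has heard so far."""
    return f"heard {len(heard)} chunk(s): {' '.join(heard)}"


def turn_based(chunks: Iterable[str]) -> List[str]:
    """Wait for the complete input, then produce a single response."""
    heard = list(chunks)      # consumes the whole input before responding
    return [respond(heard)]


def streaming(chunks: Iterable[str]) -> Iterator[str]:
    """Emit a partial response after every incoming chunk."""
    heard: List[str] = []
    for chunk in chunks:
        heard.append(chunk)
        yield respond(heard)  # output begins before the input has ended


utterance = ["book", "a", "table"]
turn_outputs = turn_based(utterance)
stream_outputs = list(streaming(utterance))
```

In this sketch the turn-based path yields one response after all three chunks arrive, while the streaming path yields a response after each chunk, with the last one matching the turn-based result.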

Technical Challenges of Concurrent Processing

Building an AI system that can simultaneously listen and speak requires solving several complex technical problems. The model must maintain coherent responses while continuously updating its understanding based on incoming information. If a user changes direction mid-sentence or provides contradictory information, the AI needs to adjust its response in real time without losing the conversational thread.

Current transformer-based models are typically run on a complete input sequence: the full prompt is processed before the first output token is predicted. Modifying this setup to handle streaming input while generating output demands new approaches to attention mechanisms and memory management. The model must track its input and output streams at the same time without degrading performance.
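One possible way to picture streaming input alongside output is to treat both as time-aligned streams merged into a single sequence of time slices. The sketch below shows only that framing, as an assumption for illustration rather than the company’s method; `interleave` and the `SILENCE` padding token are hypothetical names.

```python
from itertools import zip_longest
from typing import List, Optional, Tuple

SILENCE = "<sil>"  # hypothetical padding token for a silent time slice


def interleave(
    user: List[Optional[str]], model: List[Optional[str]]
) -> List[Tuple[str, str]]:
    """Merge two time-aligned streams into one list of (user, model) slices.

    None marks a slice in which that side says nothing; a shorter stream
    is padded with silence so both cover the same number of slices.
    """
    return [
        (u if u is not None else SILENCE, m if m is not None else SILENCE)
        for u, m in zip_longest(user, model)
    ]


# The user talks for two slices; the model starts replying in the second,
# while the user is still speaking.
slices = interleave(["hi", "there", None], [None, "hello"])
```

Each slice records what both sides did at the same moment, so overlapping speech becomes ordinary data rather than a protocol violation.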

Interruption handling presents another significant challenge. Human conversations include frequent interruptions, clarifications, and course corrections that happen naturally. An AI system processing input and output simultaneously must determine when to pause its generation, incorporate new information, and resume speaking coherently.
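The pause, incorporate, and resume cycle can be sketched as a small state machine. This is a minimal illustration under stated assumptions, not a description of any real system: `DuplexSpeaker`, its `replan` rule, and the word-per-step timing are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DuplexSpeaker:
    """Toy agent that speaks a planned reply word by word and can be interrupted."""

    plan: List[str]
    spoken: List[str] = field(default_factory=list)
    context: List[str] = field(default_factory=list)
    paused: bool = False

    def step(self, user_input: Optional[str] = None) -> Optional[str]:
        """Advance one time step; user_input is None when the user is silent."""
        if user_input is not None:
            # Interruption: stop talking and absorb what the user said.
            self.paused = True
            self.context.append(user_input)
            return None
        if self.paused:
            # The user has gone silent: replan from the new context, then resume.
            self.plan = self.replan()
            self.paused = False
        if not self.plan:
            return None
        word = self.plan.pop(0)
        self.spoken.append(word)
        return word

    def replan(self) -> List[str]:
        """Hypothetical replanning rule: acknowledge the latest interruption."""
        return ["ok,", "switching", "to", self.context[-1]]


agent = DuplexSpeaker(plan=["your", "table", "is", "booked", "for", "seven"])
# The user interrupts with "eight" at the third time step.
timeline = [None, None, "eight", None, None, None, None]
outputs = [agent.step(u) for u in timeline]
```

Here the agent abandons the rest of its original plan once interrupted. A real system would also need to decide whether an incoming sound is a true interruption or just a brief acknowledgment, which this sketch does not attempt.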

The computational requirements for this type of processing may exceed what current hardware can deliver. Processing incoming input and generating output simultaneously, while keeping latency low enough for natural conversation flow, could require specialized hardware configurations or novel optimization techniques.

Error correction becomes more complex when the model cannot revise its complete response before delivery. Unlike text-based exchanges, where a message can be edited before it is sent, spoken responses happen in real time. The model must balance speed with accuracy while generating responses that cannot be easily corrected once spoken.

Market Implications for Conversational AI

Phone-like AI interaction could significantly change user expectations for digital assistants and customer service applications. Current AI systems feel mechanical partly because of their rigid turn-taking behavior, which differs markedly from human conversation patterns.

The success of such technology depends largely on whether users actually prefer more natural conversation flows over the predictable patterns they’ve grown accustomed to with existing AI systems. Some users might find interruptions and simultaneous processing more confusing than helpful, particularly in task-oriented interactions where clarity trumps naturalness.